Challenge: Identify and ameliorate friction points in the site’s mobile navigation to help drive online sales.

Approach: Baseline the current information architecture’s performance with users through a series of assigned tasks. Generate a new IA hypothesis based on card sort exercises. Test that IA against the existing one to measure changes in performance.

6 weeks

2 weeks research planning & baseline testing

2 weeks card sort & new IA generation

2 weeks new IA testing & final analysis

Solo contributor

1 Designer/Researcher, with oversight

Key Responsibilities

Plan and drive all user research

Synthesize findings into a new IA hypothesis

Validate new IA and make final recommendations

1. IA Testing (Round One)

Full disclosure: I don’t know much about women’s athletic wear. This makes it challenging to predict how someone shopping for those items might categorize them, and customers who can’t find what they’re looking for aren’t likely to stick around your site for long.

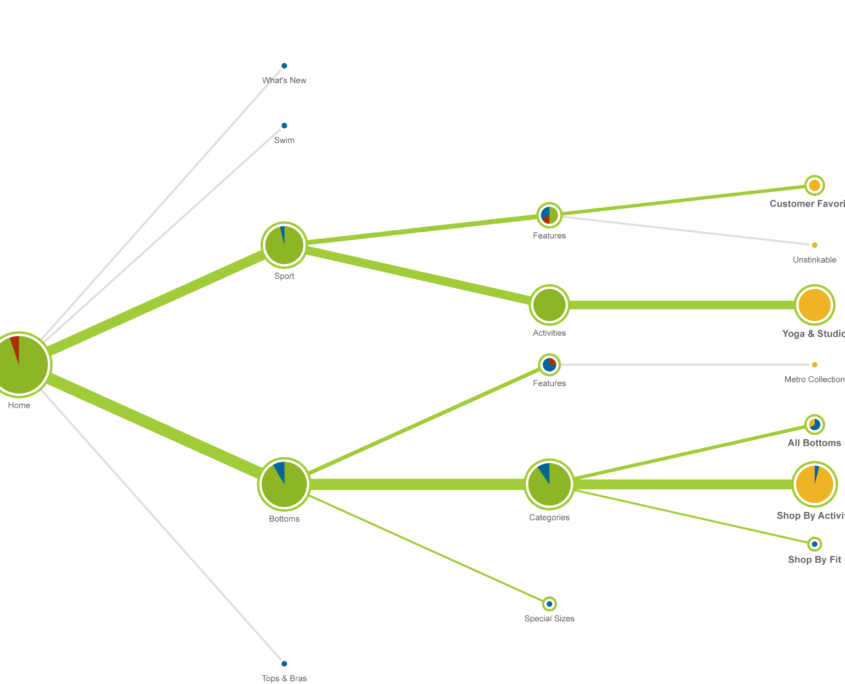

For that reason, a project with the explicit purpose of cleaning up a mobile nav became heavily oriented around user research. I started by divorcing the structure of the navigation from its visual and interaction design, then came up with a list of key tasks to test the most complex or strategically important parts of the IA.

We came out of the first round of research with a baseline of the current design’s performance, as well as some clues as to where the biggest issues lay.

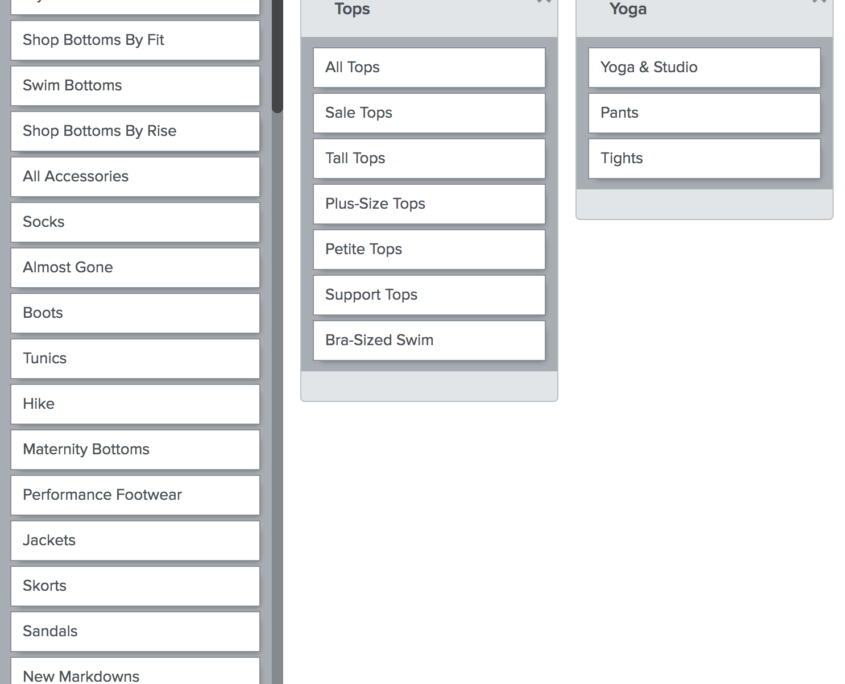

2. Card Sorts and IA Redesign

With a baseline of performance to measure against, I moved into card sort exercises to look for consensus in how customers thought about the range of available products. I ran a mix of moderated and unmoderated sessions: the moderated sessions were particularly helpful for getting richer qualitative information about each participant’s thought process, while the unmoderated ones helped flesh out the degree of consensus across participants.
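One common way to put a number on "degree of consensus" in card sort data is a pairwise co-occurrence score: the share of participants who placed two cards in the same group. The sketch below illustrates that idea only. The function name, card names, and groupings are hypothetical and are not data or tooling from this project.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts):
    """Return the fraction of participants who grouped each pair of cards together.

    sorts: one entry per participant, each a list of groups (lists of card names).
    Pairs that were never grouped together are simply absent from the result.
    """
    counts = Counter()
    for groups in sorts:
        for group in groups:
            # Count every unordered pair of cards within the same group once.
            for a, b in combinations(sorted(set(group)), 2):
                counts[frozenset((a, b))] += 1
    total = len(sorts)
    return {pair: count / total for pair, count in counts.items()}


# Hypothetical sorts from three participants (illustrative only).
sorts = [
    [["leggings", "yoga pants"], ["sports bra", "tank top"]],
    [["leggings", "yoga pants", "tank top"], ["sports bra"]],
    [["leggings"], ["yoga pants", "sports bra", "tank top"]],
]
scores = co_occurrence(sorts)

# Print the pairs from strongest to weakest agreement.
for pair, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(sorted(pair), round(score, 2))
```

Pairs with high scores are candidates for sharing a category in the new IA, while low or missing scores suggest the cards belong apart.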

I used these inputs, as well as a competitive analysis of similar retailers, to inform the design of a new navigation scheme.

3. IA Testing (Round Two)

After reviewing the existing nav and coming up with a new IA, it was time to validate that the proposed changes would lead to the performance improvements the client was looking for. Using the same setup and tasks as the baseline research phase, I compared results for a few specific variables:

- Directness scores (How often did users have to back out from navigation choices?)

- Correctness scores (How often did users ultimately select a correct navigation option?)

- Task completion time (Regardless of accuracy, how long did it take them to complete the task?)
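As a rough illustration of how these three variables can be computed from raw task attempts, here is a minimal sketch. The `Attempt` record, its field names, and the sample values are assumptions made for the example. They do not reflect the actual testing tool or results, and "direct success" is taken here to mean a correct answer reached without backtracking.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Attempt:
    correct: bool      # did the participant end on a correct destination?
    backtracked: bool  # did they back out of any navigation choice?
    seconds: float     # time taken to finish the task


def summarize(attempts):
    """Aggregate one task's attempts into rates and a mean completion time."""
    n = len(attempts)
    return {
        # Correct answer reached without ever backing out.
        "direct_success": sum(a.correct and not a.backtracked for a in attempts) / n,
        # Share of attempts with no backtracking, regardless of outcome.
        "directness": sum(not a.backtracked for a in attempts) / n,
        # Share of attempts ending on a correct destination.
        "correctness": sum(a.correct for a in attempts) / n,
        # Average time regardless of accuracy.
        "mean_seconds": mean(a.seconds for a in attempts),
    }


# Hypothetical attempts for a single task (illustrative only).
attempts = [
    Attempt(correct=True, backtracked=False, seconds=10.0),
    Attempt(correct=True, backtracked=True, seconds=20.0),
    Attempt(correct=False, backtracked=False, seconds=15.0),
    Attempt(correct=True, backtracked=False, seconds=15.0),
]
summary = summarize(attempts)
print(summary)
```

Running the same summary over the baseline and the new IA, task by task, gives directly comparable numbers for each round.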

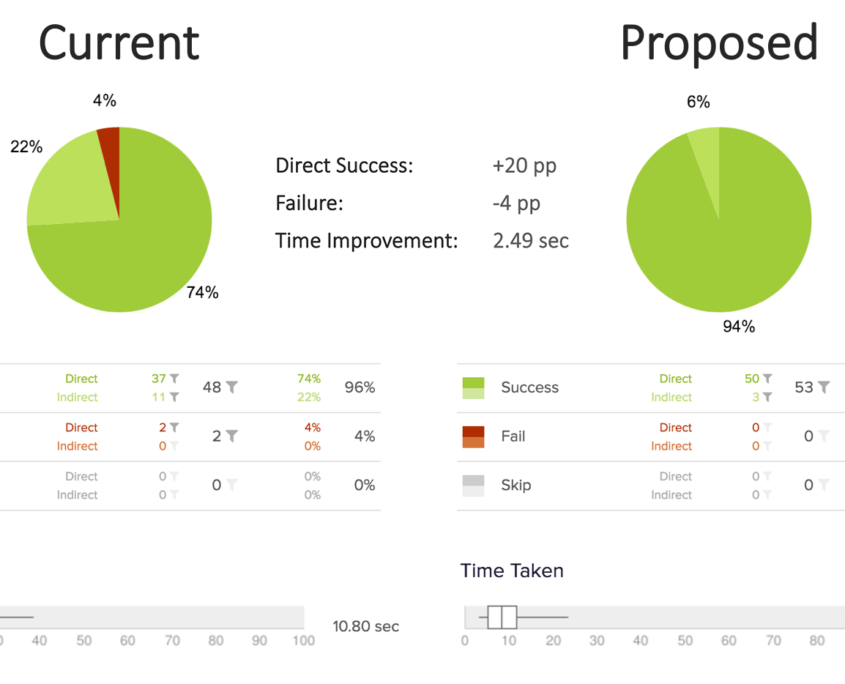

The results, thankfully, spoke for themselves. By cutting out unnecessary layers of hierarchy and improving the information scent for key items, the new IA scores across all tasks averaged:

- +13% Direct successes

- -25% Incorrect responses

- -30% Task completion time

In the case of some major products from the catalog, the figures were even more dramatic. With yoga pants, for example, direct successes improved from 74% to 94%, with a complete elimination of incorrect responses and a substantially shorter task completion time.